Meta Ads Creative Testing: The Framework for Finding Winning Ads Faster

A practical Meta Ads creative testing framework for 2026: how to structure tests, measure fatigue, scale winners, and avoid false positives.

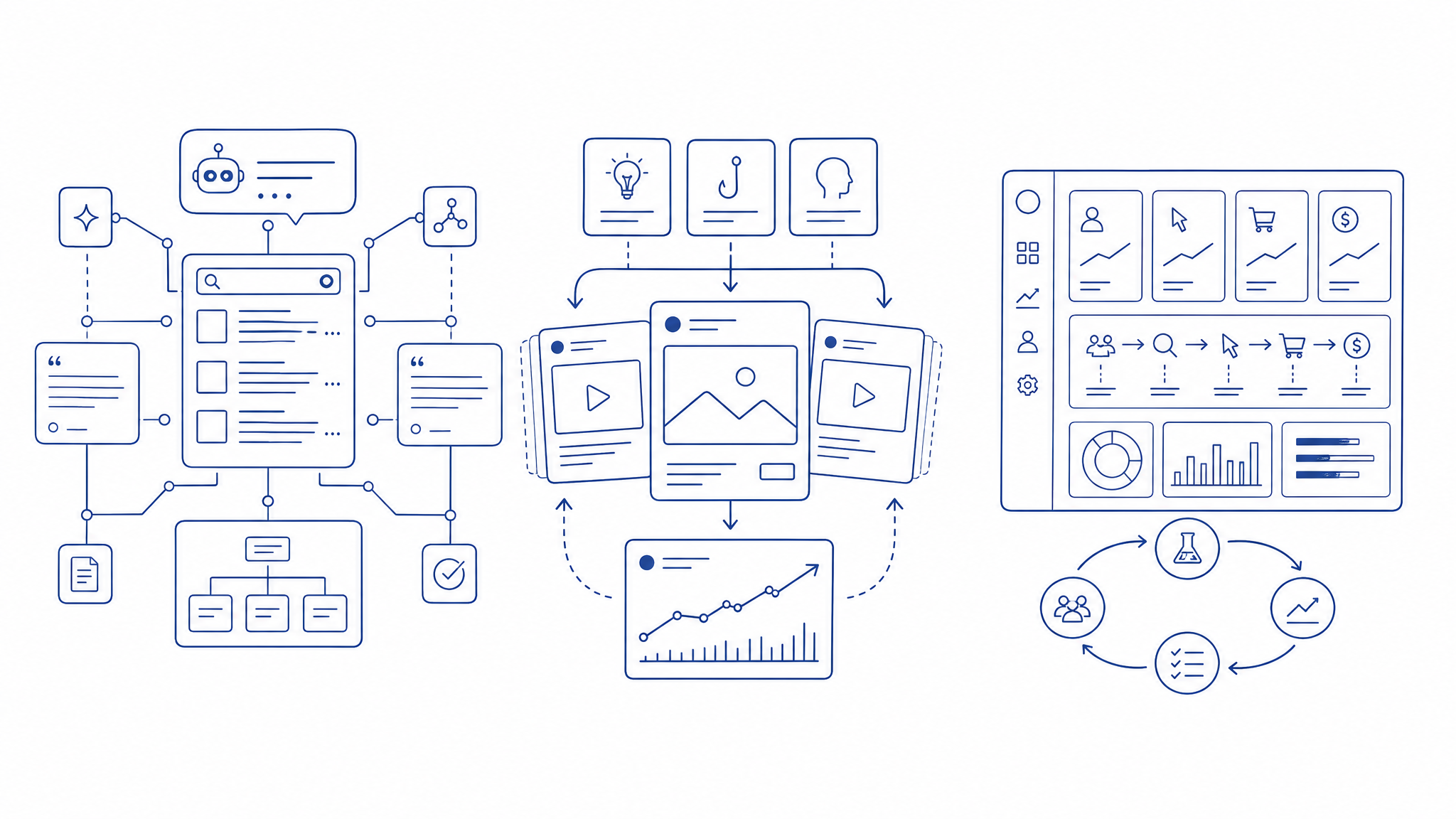

Meta Ads performance in 2026 is mostly a creative problem. Targeting has become broader, campaign structures have become simpler, and algorithmic delivery handles much of the optimization that media buyers used to do manually. The lever that still compounds is creative: angles, hooks, formats, offers, landing page fit, and the speed at which you learn.

But most creative testing programs are messy. Teams launch too many random variations, judge winners too early, and confuse platform-reported ROAS with real incremental demand. A good testing system does the opposite: it isolates variables, learns quickly, and turns creative performance into a repeatable operating rhythm.

Why Creative Testing Matters More Now

Meta’s delivery system is extremely good at finding pockets of response inside broad audiences. That is useful, but it also means the algorithm can only work with the creative inputs you provide. If every ad says the same thing in slightly different words, you are not testing creative. You are testing formatting.

The best Meta accounts have creative diversity across four dimensions:

- Angle: the reason someone should care

- Format: the way the idea is packaged

- Offer: the commercial promise or incentive

- Audience maturity: whether the ad speaks to cold, warm, or high-intent buyers

Creative testing exists to discover which combinations create profitable attention.

The Creative Testing Hierarchy

Not all tests are equal. A button color test is not the same as a positioning test. If you test low-impact variables too early, you waste budget and pollute the learning loop.

Use this hierarchy.

Level 1: Strategic angles

This is the highest-leverage layer. What core belief, pain, desire, or problem does the ad speak to?

Examples:

- Save time

- Reduce wasted spend

- Avoid embarrassment

- Grow revenue faster

- Beat a competitor

- Make a complex process simpler

- Feel more in control

If an angle works, many executions can work. If the angle is weak, better editing will not save it.

Level 2: Offers and proof

Once an angle has traction, test the promise. Do users respond better to a free audit, a calculator, a demo, a benchmark report, a limited-time offer, or a direct product pitch?

Proof also matters. Testimonials, numbers, screenshots, founder explanations, UGC-style demonstrations, and case-study snippets can all change conversion quality.

Level 3: Formats

Format determines how quickly the idea lands. On Meta, strong formats include:

- founder-style direct-to-camera video

- customer problem/solution stories

- static comparison graphics

- before/after demonstrations

- product walkthroughs

- listicle-style carousels

- native-looking Reels edits

Format tests should not happen in isolation. A weak angle in a trendy format is still weak.

Level 4: Hooks and openings

The first seconds matter, especially in video. But hook testing only works when the underlying concept is clear. Test hooks after you know the angle deserves more spend.

Good hooks create immediate context:

- “Most brands scale Meta Ads too late.”

- “Your CPA problem might be a landing page problem.”

- “Here is why your winning ad stopped working.”

Bad hooks create empty curiosity:

- “You won’t believe this.”

- “This one trick changed everything.”

- “Stop scrolling.”

A Practical Account Structure

For most accounts, creative testing should be simple enough to maintain every week.

A practical structure:

- Evergreen scaling campaign for proven winners

- Creative testing campaign for structured experiments

- Retargeting or warm audience campaign only if volume justifies it

Inside the testing campaign, group concepts by angle. Avoid throwing unrelated ads into the same ad set and calling it a test. If one ad wins, you need to know what won.

How Much Budget Should Testing Get?

There is no universal number, but the principle is clear: testing needs enough budget to generate a decision, not just impressions.

A useful starting point:

| Monthly Meta Spend | Testing Budget (% of spend) | Weekly New Concepts |
|---|---|---|
| $5k–$15k | 15–25% | 2–4 |
| $15k–$50k | 20–30% | 4–8 |
| $50k–$200k | 25–35% | 8–15 |
| $200k+ | 30%+ | 15+ |

Lower-spend accounts should test fewer, better concepts. High-spend accounts need creative volume because fatigue arrives faster.

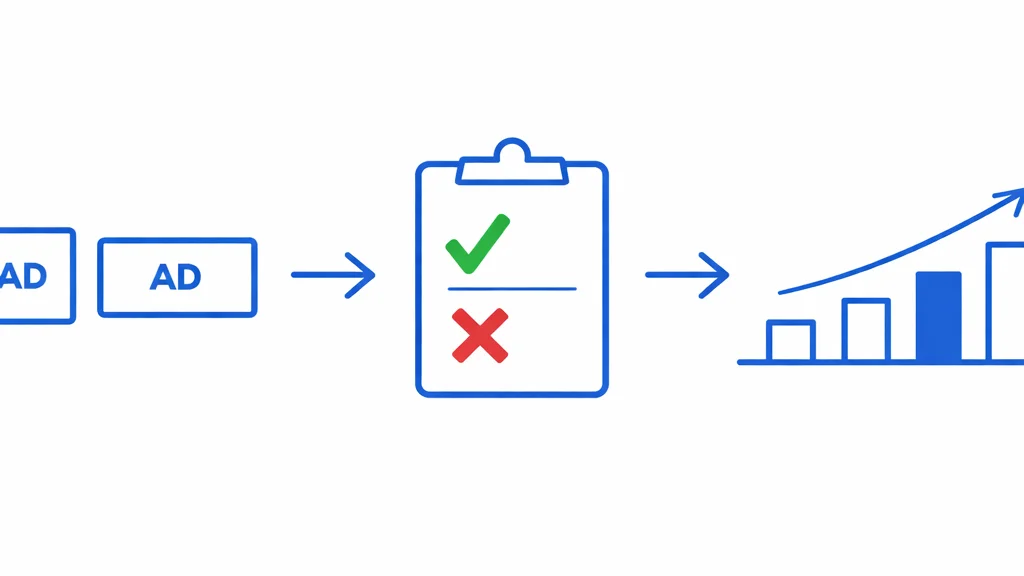

Define a Winning Ad Before You Launch

Most teams decide what “winning” means after the data comes in. That invites bias.

Before launching a test, define:

- primary KPI: CPA, ROAS, cost per lead, contribution margin, or qualified pipeline

- minimum spend or conversion threshold

- evaluation window

- acceptable tradeoffs between volume and efficiency

- whether the ad needs to win in-platform or in backend revenue

For ecommerce, a creative can look mediocre on platform ROAS but produce better new-customer quality. For B2B, a low-CPL ad can produce terrible pipeline. The test should optimize for the business outcome, not the easiest metric.

A simple decision rule is better than a perfect one created too late. For purchase campaigns, avoid declaring a winner before the ad has spent at least one to two times your target CPA unless the signal is extremely strong. For lead generation, separate raw leads from qualified leads as early as possible. For high-ticket B2B, judge concepts by pipeline quality, not form fills alone.
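One way to make the purchase-campaign rule concrete is to write it down as a function before launch. The sketch below is an illustration under assumptions: the names `ready_to_judge` and `verdict` are hypothetical, the default multiple of 2 is the upper end of the "one to two times" range, and comparing the observed CPA against the target is one possible pass/fail criterion, not the only one.

```python
def ready_to_judge(spend, target_cpa, multiple=2.0):
    """True once an ad has spent at least `multiple` times the target CPA."""
    if target_cpa <= 0:
        raise ValueError("target_cpa must be positive")
    return spend >= multiple * target_cpa


def verdict(spend, conversions, target_cpa, multiple=2.0):
    """Classify an ad as 'too early', 'meets target', or 'misses target'.

    Assumption: an ad 'meets target' when its observed CPA is at or below target.
    """
    if not ready_to_judge(spend, target_cpa, multiple):
        return "too early"
    if conversions == 0:
        # Spent past the threshold without a single conversion.
        return "misses target"
    observed_cpa = spend / conversions
    return "meets target" if observed_cpa <= target_cpa else "misses target"


print(verdict(spend=50, conversions=2, target_cpa=40))
print(verdict(spend=120, conversions=4, target_cpa=40))
```

The first call returns "too early" because $50 is below the $80 threshold, even though the early CPA looks attractive. That is exactly the kind of premature winner the rule is meant to block.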

The Creative Brief Template

Testing quality depends on briefing quality. Every new concept should answer these questions before production starts:

| Brief Element | What to Define |
|---|---|
| Angle | The pain, desire, belief, or objection the ad is testing |
| Audience maturity | Cold, problem-aware, solution-aware, warm, or retargeting |
| Promise | The outcome the ad suggests is possible |
| Proof | Testimonial, number, screenshot, demo, founder point of view, or social proof |
| Format | Static, UGC-style video, founder video, carousel, comparison graphic, demo |
| Hook | The first line or first three seconds |
| CTA | Audit, quiz, calculator, offer, demo, shop, subscribe |
| Landing page match | Where the click goes and whether the message continues |

This prevents the most common production failure: making assets before anyone knows what is actually being tested.

Avoid False Positives

Meta’s reporting can make random variance look like insight. Be careful when declaring winners.

Common false positives:

- One ad gets early conversions from a tiny sample and looks unbeatable

- Retargeting-heavy delivery inflates ROAS

- Existing customers convert and make acquisition look cheaper than it is

- A discount offer wins on volume but destroys margin

- A lead magnet drives cheap leads that sales cannot use

The fix is not perfection. The fix is discipline. Use minimum thresholds, compare cohorts, and look at backend quality when possible.
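To see how existing customers can flatter an acquisition number, it helps to compute CPA two ways from the same spend. This is a minimal sketch, assuming you can export orders attributed to an ad with a flag for new versus returning customers; the field name `is_new_customer` and the function `cpa_views` are hypothetical.

```python
def cpa_views(spend, orders):
    """Return (blended_cpa, new_customer_cpa) for one ad.

    `orders` is a list of dicts with a boolean 'is_new_customer' key
    (a hypothetical backend field). A value is None when there is
    nothing to divide by.
    """
    total_orders = len(orders)
    new_orders = sum(1 for order in orders if order["is_new_customer"])
    blended_cpa = spend / total_orders if total_orders else None
    new_customer_cpa = spend / new_orders if new_orders else None
    return blended_cpa, new_customer_cpa


# Illustrative numbers only: 10 attributed orders, 6 from new customers.
orders = [{"is_new_customer": i < 6} for i in range(10)]
blended, new_only = cpa_views(600, orders)
print(blended, new_only)
```

With these made-up inputs the blended CPA is $60 while the new-customer CPA is $100. If the acquisition target sits between those two numbers, the ad passes on the blended view and fails on the view that matters.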

A useful testing readout should include both the platform view and the business view:

| View | Questions to Answer |
|---|---|
| Platform | Did Meta deliver spend? Which ad earned efficient conversions? What happened to CTR, CPM, CPA, and frequency? |
| Customer quality | Were buyers new or returning? Did leads become qualified? Did AOV, margin, or pipeline quality change? |
| Learning | Which angle, proof point, offer, or format seems responsible? What should be produced next? |

If you cannot explain why an ad won, treat it as a useful asset, not a reliable insight.

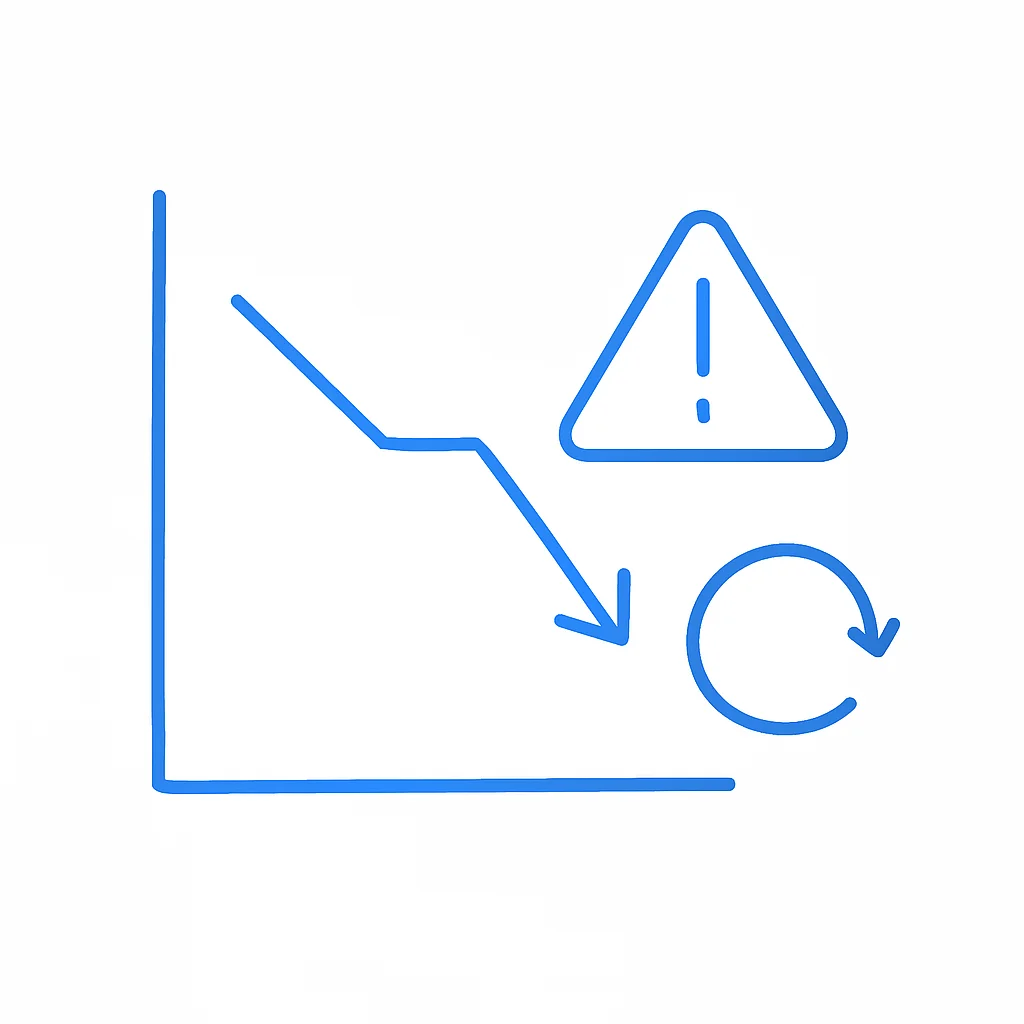

Measure Fatigue Before Performance Collapses

Creative fatigue does not appear all at once. It usually shows up as a pattern:

- Frequency rises

- CPM or CPA starts creeping up

- Click-through rate softens

- Conversion rate becomes less stable

- Delivery shifts spend away from the ad, or efficiency breaks down

By the time everyone notices the ad is tired, you are already late. Build a fatigue dashboard that tracks frequency, spend concentration, CPA trend, CTR trend, and, for video, thumbstop rate (commonly measured as 3-second video plays divided by impressions).
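The fatigue pattern described above can be approximated by comparing an ad's most recent days against its first days. The sketch below is one possible approach, not a standard method: the function `fatigue_signals`, the 3-day window, and the 15% threshold are all assumptions to tune per account, and the input is assumed to be a chronological list of daily rows with `frequency`, `cpa`, and `ctr` values.

```python
from statistics import mean


def fatigue_signals(days, window=3, threshold=0.15):
    """Return the metrics that moved in the 'tired' direction by at least `threshold`.

    Compares the mean of the last `window` days with the mean of the first
    `window` days. Rising frequency and CPA are bad; falling CTR is bad.
    """
    if len(days) < 2 * window:
        return []  # not enough history for a before/after comparison
    first, last = days[:window], days[-window:]
    signals = []
    # +1 means "an increase is bad", -1 means "a decrease is bad".
    for metric, bad_direction in (("frequency", 1), ("cpa", 1), ("ctr", -1)):
        baseline = mean(day[metric] for day in first)
        recent = mean(day[metric] for day in last)
        if baseline == 0:
            continue  # relative change is undefined
        change = (recent - baseline) / baseline
        if change * bad_direction >= threshold:
            signals.append(metric)
    return signals


# Illustrative data: frequency up 50%, CPA up 10%, CTR down 25%.
history = (
    [{"frequency": 1.0, "cpa": 20, "ctr": 2.0}] * 3
    + [{"frequency": 1.5, "cpa": 22, "ctr": 1.5}] * 3
)
print(fatigue_signals(history))
```

Here frequency and CTR cross the threshold while CPA does not yet. That combination is the early warning: attention is eroding before cost has visibly moved.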

Scaling Winners

When an ad wins, do not immediately make twenty tiny edits. First, scale the winner. Then create controlled variations.

High-value variations include:

- same angle, new hook

- same hook, new proof point

- same concept, different format

- same offer, different audience maturity

- same message, different landing page match

The goal is to build a creative family around the winning idea. One winning ad is useful. A winning angle with multiple executions is an asset.

Keep the first scaled version close to the original. Then expand outward in rings: new hook, new proof, new format, new offer, new landing page. If you change everything at once, you may scale spend, but you lose the learning.

The Weekly Creative Rhythm

A strong testing system runs on cadence.

Monday: review last week’s results, fatigue signals, and spend concentration.

Tuesday: select concepts for production and write briefs.

Wednesday: produce assets and build landing page/message match if needed.

Thursday: launch tests with clean naming conventions.

Friday: check delivery issues, not final results.

The following week: evaluate against predefined thresholds and move winners into scaling.

This rhythm creates compound learning. Every week, the account gets smarter.

Naming Conventions Matter

Creative testing becomes impossible if naming is sloppy. Use names that preserve the learning.

A practical naming structure:

Angle_Format_Hook_Offer_Date

Example:

CostLeak_Static_AuditYourWaste_FreeAudit_2026-05

This lets you analyze patterns later. You can answer questions like: Which angles work? Which formats scale? Which offers attract high-quality customers?

If possible, mirror the same naming in your creative brief, file names, ad names, and reporting dashboard. The unsexy operational detail is what makes pattern analysis possible three months later.
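A consistent name is only useful if it can be split back into its parts. Here is a minimal parsing sketch, assuming the five-field structure above and that no field contains an underscore; `AdName`, `parse_ad_name`, and `count_by_angle` are hypothetical helper names, and the second ad name in the example is invented for illustration.

```python
from collections import Counter
from typing import NamedTuple


class AdName(NamedTuple):
    angle: str
    fmt: str  # the Format field
    hook: str
    offer: str
    date: str


def parse_ad_name(name):
    """Split an ad name of the form Angle_Format_Hook_Offer_Date into its fields."""
    parts = name.split("_")
    if len(parts) != 5:
        raise ValueError(
            f"expected 5 underscore-separated fields, got {len(parts)}: {name!r}"
        )
    return AdName(*parts)


def count_by_angle(names):
    """Count how many ads were launched per angle."""
    return Counter(parse_ad_name(name).angle for name in names)


names = [
    "CostLeak_Static_AuditYourWaste_FreeAudit_2026-05",
    "CostLeak_Founder_WhereBudgetGoes_FreeAudit_2026-05",  # invented example
]
print(parse_ad_name(names[0]))
print(count_by_angle(names))
```

Rejecting malformed names at parse time is a deliberate choice: a name that silently parses into the wrong fields would corrupt the pattern analysis later.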

Creative Testing Is a Strategy Function

The biggest unlock is cultural. Creative testing is not just “making more ads.” It is how the business learns what the market cares about.

Winning concepts should inform:

- landing page copy

- email sequences

- sales scripts

- product positioning

- SEO content

- offer strategy

If a Meta ad angle consistently wins, that is market feedback. Do not leave it trapped inside Ads Manager.

The Bottom Line

Meta Ads performance is increasingly determined by the quality and speed of creative learning. The best advertisers do not simply produce more assets. They build systems that test meaningful differences, identify real winners, scale them carefully, and feed insights back into the business.

Creative testing is not a media buying chore. It is one of the fastest ways to understand demand.

Key Terms in This Article

CPM

Cost Per Mille (thousand impressions) – what you pay for 1,000 ad views.

CPA

Cost Per Acquisition – how much you pay to acquire one customer or conversion.

CPL

Cost Per Lead – the cost to generate one lead, whether or not that lead later qualifies.

CTR

Click-Through Rate – the percentage of people who click your ad after seeing it.

ROAS

Return On Ad Spend – revenue generated for every dollar spent on advertising.

KPI

Key Performance Indicator – a measurable value that shows how effectively you're achieving objectives.
